Wednesday Seminars | Department of Computer Science | University of Cambridge

Geometric Gaussian Processes

Viacheslav (Slava) Borovitskiy

https://vab.im

Outline

Background:

Probabilistic models

- Motivation: Model-based decision-making

- Basic types of probabilistic models

- Probabilistic models on geometric domains

- Motivating geometric Gaussian processes: A benchmark

Geometric Gaussian processes

- Theory

- Various geometric settings

- Implementation

Summary

Probabilistic models

Motivation:

Model-based decision-making

Model-based decision-making

Probabilistic models: input ⟶ distribution (prediction + uncertainty)

Allows computing queries like probability of improvement: $\!\alpha(x) \!=\! \mathbb{P} \! \del{f(x) \!>\!\! f^*}$

They can drive selection of the next point to evaluate [Bayesian optimization]

Basic types of probabilistic models

Basic types of probabilistic models

Bayesian neural networks

Deep ensembles

$\underbrace{\hphantom{\text{Bayesian Neural Networks}\qquad{Deep ensembles}}}_{\text{defined by architectures}}$

Gaussian processes

$\underbrace{\hphantom{\text{Gaussian Processes}}}_{\text{defined by kernels}}$

Basic types of probabilistic models: Discussion

Gaussian processes (GPs) — gold standard.

In practice: General-purpose Gaussian processes (RBF and Matérn kernels).

-

Inductive bias:

- Stationarity: training on a shifted data produces a shifted model.

- Smoothness: continuity and differentiability.

- Scope:

- Work very well in low dimensions (e.g. tabular data).

-

Struggle in high dimensions (e.g. image / text data).

Though deep ensembles and Bayesian neural networks struggle too!

Geometric GPs: extend scope to geometric settings.

Probabilistic models

on

Geometric domains

Geometric domains

Manifolds

e.g. for robotics

(analytic or meshes)

Graphs

e.g. for road networks

(graphs: transductive)

Spaces of graphs

e.g. for drug design

(graphs: inductive)

Example graph learning problem

Example graph learning problem

: Node regression

$$ f : V \to \R $$

$$ f\Big(\smash{\includegraphics[height=2.75em,width=1.5em]{figures/g1.svg}}\Big) = 2 $$

$$ f\Big(\smash{\includegraphics[height=2.75em,width=1.5em]{figures/g2.svg}}\Big) = 3 $$

$$ f\Big(\smash{\includegraphics[height=2.75em,width=1.5em]{figures/g3.svg}}\Big) = 5 $$

Example graph learning problem: Probabilistic node regression

$$ f : V \to \text{distributions over } \R $$

$$ f\Big(\smash{\includegraphics[height=2.75em,width=1.5em]{figures/g1.svg}}\Big) = \f{N}(2, 0.5^2) $$

$$ f\Big(\smash{\includegraphics[height=2.75em,width=1.5em]{figures/g2.svg}}\Big) = \f{N}(3, 0.2^2) $$

$$ f\Big(\smash{\includegraphics[height=2.75em,width=1.5em]{figures/g3.svg}}\Big) = \f{N}(5, 0.7^2) $$

Benchmark

Reminder: Basic types of probabilistic models

Bayesian neural networks

Deep ensembles

$\underbrace{\hphantom{\text{Bayesian Neural Networks}\qquad{Deep ensembles}}}_{\text{defined by architectures}}$

Gaussian processes

$\underbrace{\hphantom{\text{Gaussian Processes}}}_{\text{defined by kernels}}$

Benchmark: Data

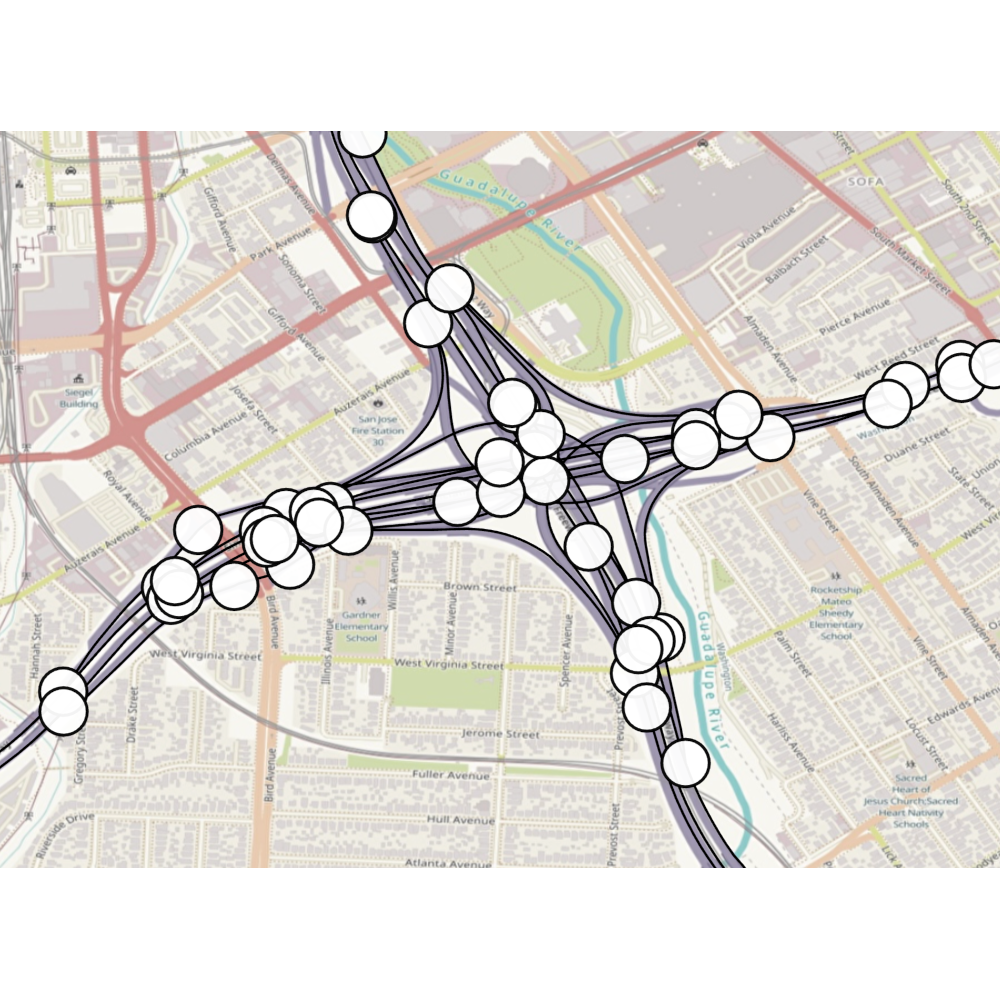

Benchmark: Caltrans Performance Measurement System (PeMS)

San Jose highway network: graph with 1016 nodes

325 labeled nodes with known traffic speed in miles per hour

Use 250 labeled nodes for training data and 75 for test data

Dataset details: Borovitskiy et al. (AISTATS 2021)

Benchmark: Results

Trivial baseline

Trivial baseline

: prediction

: uncertainty

Results

Results

Figure: Uncertainty estimates of a geometric Gaussian process.

This benchmark: geometric GP is best, GNN ensemble fails.

Geometric Gaussian processes

Background: Gaussian processes (GPs)

Gaussian processes — random functions with jointly Gaussian marginals.

Bayesian learning: prior GP + data = posterior GP

Prior GPs are determined by kernels (covariance functions)

— have to be positive semi-definite (PSD),

— not all kernels define "good" GPs.

Background: General-purpose GPs in $\R^d$

Matérn kernels:

$$ \htmlData{class=fragment fade-out,fragment-index=6}{ \footnotesize \mathclap{ k_{\nu, \kappa, \sigma^2}(x,x') = \sigma^2 \frac{2^{1-\nu}}{\Gamma(\nu)} \del{\sqrt{2\nu} \frac{\abs{x-x'}}{\kappa}}^\nu K_\nu \del{\sqrt{2\nu} \frac{\abs{x-x'}}{\kappa}} } } \htmlData{class=fragment d-print-none,fragment-index=6}{ \footnotesize \mathclap{ k_{\infty, \kappa, \sigma^2}(x,x') = \sigma^2 \exp\del{-\frac{\abs{x-x'}^2}{2\kappa^2}} } } $$

$\sigma^2$: variance

$\kappa$: length scale

$\nu$: smoothness

$\nu\to\infty$: RBF kernel (Gaussian, heat, diffusion)

$\nu = 1/2$

$\nu = 3/2$

$\nu = 5/2$

$\nu = \infty$

Can we generalize these to geometric domains?

Distance-based approach

$$ k_{\infty, \kappa, \sigma^2}(x,x') = \sigma^2\exp\del{-\frac{|x - x'|^2}{2\kappa^2}} $$

$$ k_{\infty, \kappa, \sigma^2}^{(d)}(x,x') = \sigma^2\exp\del{-\frac{d(x,x')^2}{2\kappa^2}} $$

Graph nodes: not PSD for some $\kappa$ unless can be isometrically embedded into a Hilbert space.

Schoenberg (Trans. Am. Math. Soc. 1938).

Manifolds: not PSD for some $\kappa$ (in some cases—for all) unless the manifold is isometric to $\mathbb{R}^d$.

Feragen et al. (CVPR 2015) and Da Costa et al. (SIMODS 2025).

Not PSD on the square graph.

Not PSD on the circle manifold.

Another approach: stochastic partial differential equations

$$ \htmlData{class=fragment,fragment-index=0}{ \ubr{\del{\frac{2\nu}{\kappa^2} - \Delta}^{\frac{\nu}{2}+\frac{d}{4}} f = \c{W}}{\t{Matérn}} } $$

$\Delta$: Laplacian

$\c{W}$: Gaussian white noise

$d$: dimension

Generalizes well

Same inductive biases: stationary and smooth

Implicit: no formula for the kernel

Whittle (ISI 1963)

Lindgren et al. (JRSSB 2011)

Making it explicit:

node sets of graphs

Matérn kernels on weighted undirected graphs

SPDE turns into a stochastic linear system: solution has kernel $$ \htmlData{fragment-index=2,class=fragment}{ k_{\nu, \kappa, \sigma^2}(i, j) = \frac{\sigma^2}{C_{\nu, \kappa}} \sum_{n=0}^{\abs{V}-1} \Phi_{\nu, \kappa}(\lambda_n) \v{f_n}(i)\v{f_n}(j) } $$

$\lambda_n, \v{f_n}$: eigenvalues and eigenvectors of the Laplacian matrix

$$ \htmlData{fragment-index=4,class=fragment}{ \Phi_{\nu, \kappa}(\lambda) = \begin{cases} \htmlData{fragment-index=5,class=fragment}{ \del{\frac{2\nu}{\kappa^2} - \lambda}^{-\nu} } & \htmlData{fragment-index=5,class=fragment}{ \nu\lt\infty : \t{Matérn} } \\ \htmlData{fragment-index=6,class=fragment}{ e^{-\frac{\kappa^2}{2} \lambda} } & \htmlData{fragment-index=6,class=fragment}{ \nu = \infty : \t{heat (sq. exp., RBF)} } \end{cases} } $$

Examples: $k_{5/2}(\htmlStyle{color:rgb(242, 249, 88)!important;}{\bullet},\.)$ on node sets of different graphs

Making it explicit:

Other settings

Making it explicit: Other settings

Compact manifolds: $$ \htmlData{class=fragment}{ \begin{aligned} k_{\nu, \kappa, \sigma^2}(x,x') &= \frac{\sigma^2}{C_{\nu, \kappa}} \sum_{n=0}^\infty \Phi_{\nu, \kappa}(\lambda_n) f_n(x) f_n(x') \\ &= \frac{\sigma^2}{C_{\nu, \kappa}} \sum_{l=0}^\infty \Phi_{\nu, \kappa}(\lambda_l) \underbrace{\sum_{s=1}^{d_s} f_{l, s}(x) f_{l, s}(x')}_{G_l(x, x')} \end{aligned} } $$

Infinite summation where $\lambda_n, f_n$ are Laplace–Beltrami eigenpairs

Addition theorems: more efficient $G_l(x, x')$-based expressions

Making it explicit: Other settings

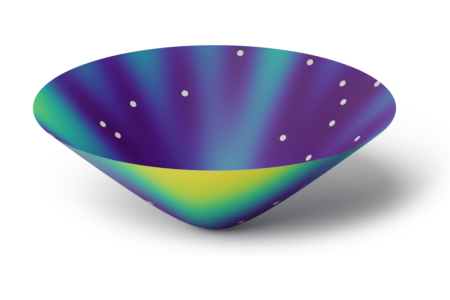

Non-compact manifolds:

- No summation: instead, integral approximated via Monte Carlo

Spaces of graphs:

- Geometry: make a set of graphs to be a node set of a larger graph

- Then, heat and Matérn kernels are automatically defined

- Larger graph is huge: need clever computational techniques

Gaussian processes:

Various geometric settings

Gaussian processes:

Various geometric settings

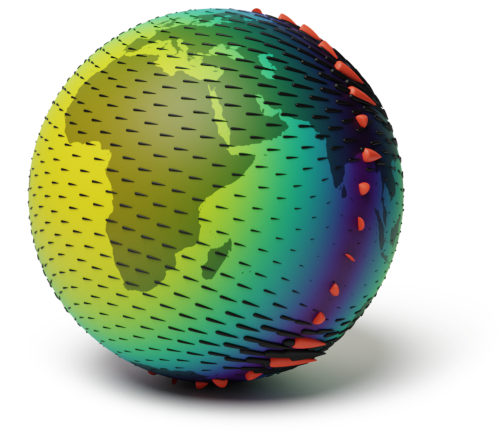

Simple manifolds:

meshes, spheres, tori

Borovitskiy et al. (AISTATS 2020)

Compact Lie groups and

homogeneous spaces:

$\small\f{SO}(n)$, resp. $\small\f{Gr}(n, r)$

Azangulov et al. (JMLR 2024)

Non-compact symmetric

spaces: $\small\bb{H}_n$ and $\small\f{SPD}(n)$

Azangulov et al. (JMLR 2024)

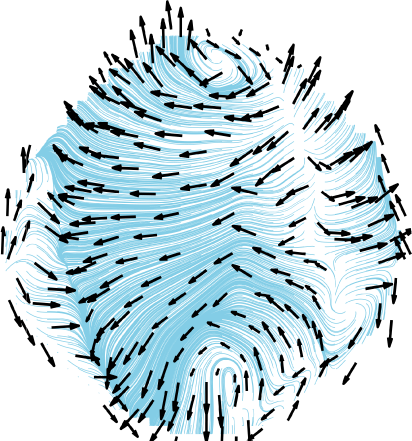

Gaussian processes: Various geometric settings

Vector fields on simple manifolds

Robert-Nicoud et al. (AISTATS 2024)

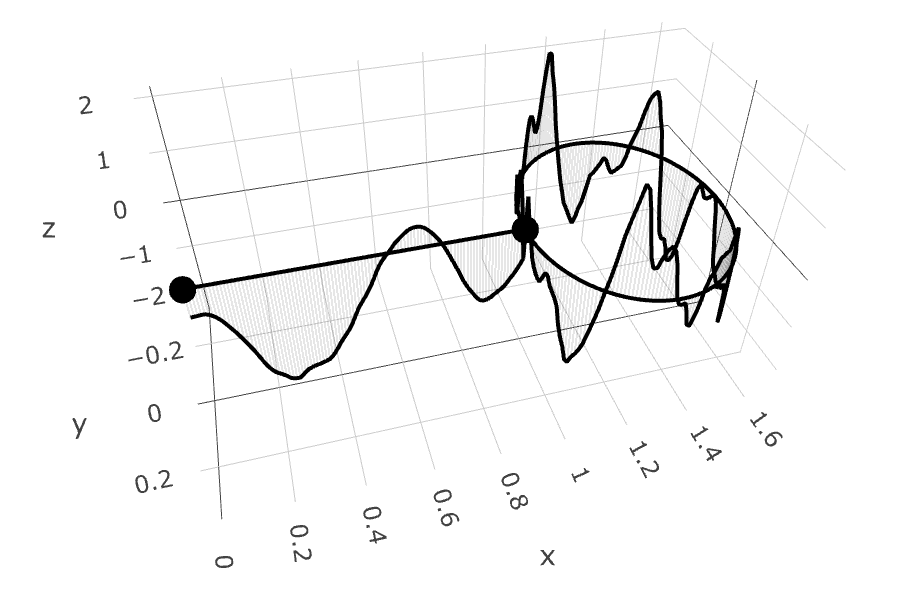

Gaussian processes: Various geometric settings

Edges of graphs

Yang et al. (AISTATS 2024)

Metric graphs

Bolin et al. (Bernoulli 2024)

Cellular Complexes

Alain et al. (ICML 2024)

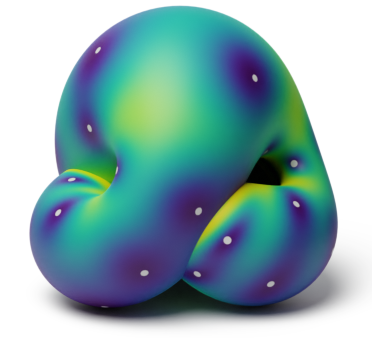

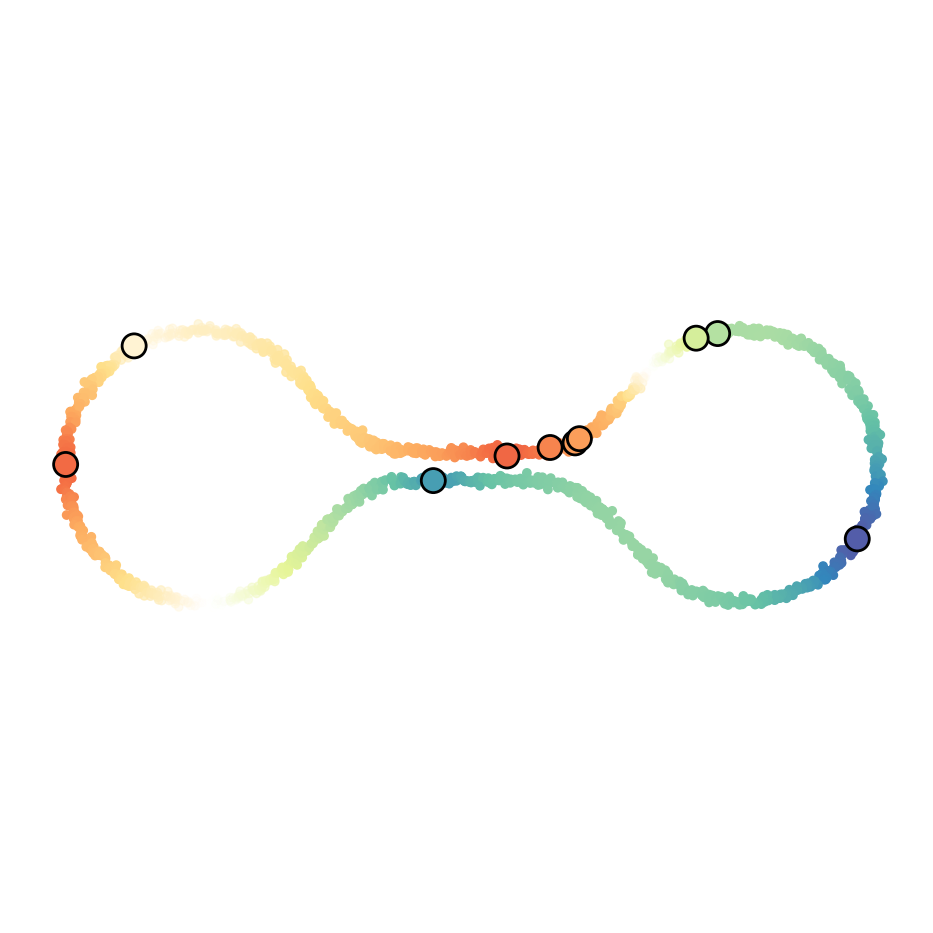

Spaces of Graphs

Borovitskiy et al. (AISTATS 2023)

Gaussian processes: Various geometric settings

Semi-supervised learning:

scalar functions

Fichera et al. (NeurIPS 2023)

Semi-supervised learning:

vector fields

Peach et al. (ICLR 2024)

Implementation

Implementation

https://geometric-kernels.github.io/

Implementation: Details

Supported spaces:

Supported backends: PyTorch, JAX, TensorFlow, NumPy.

Summary

Summary

Geometric machine learning: increasingly popular

- Waves: kernels on structured data, geometric deep learning

Geometric probabilistic models: emerging research area

- Geometric probabilistic > geometric + probabilistic

- Geometric Gaussian processes: strong off-the-shelf baselines

Thank you!

https://vab.im/

Thank you!

https://vab.im/