Geometric Probabilistic Models

- Three types of probabilistic models: an example problem

- Deep dive: geometric Gaussian processes

- Emerging applications

- Software

- Summary

References

Basic types of probabilistic models

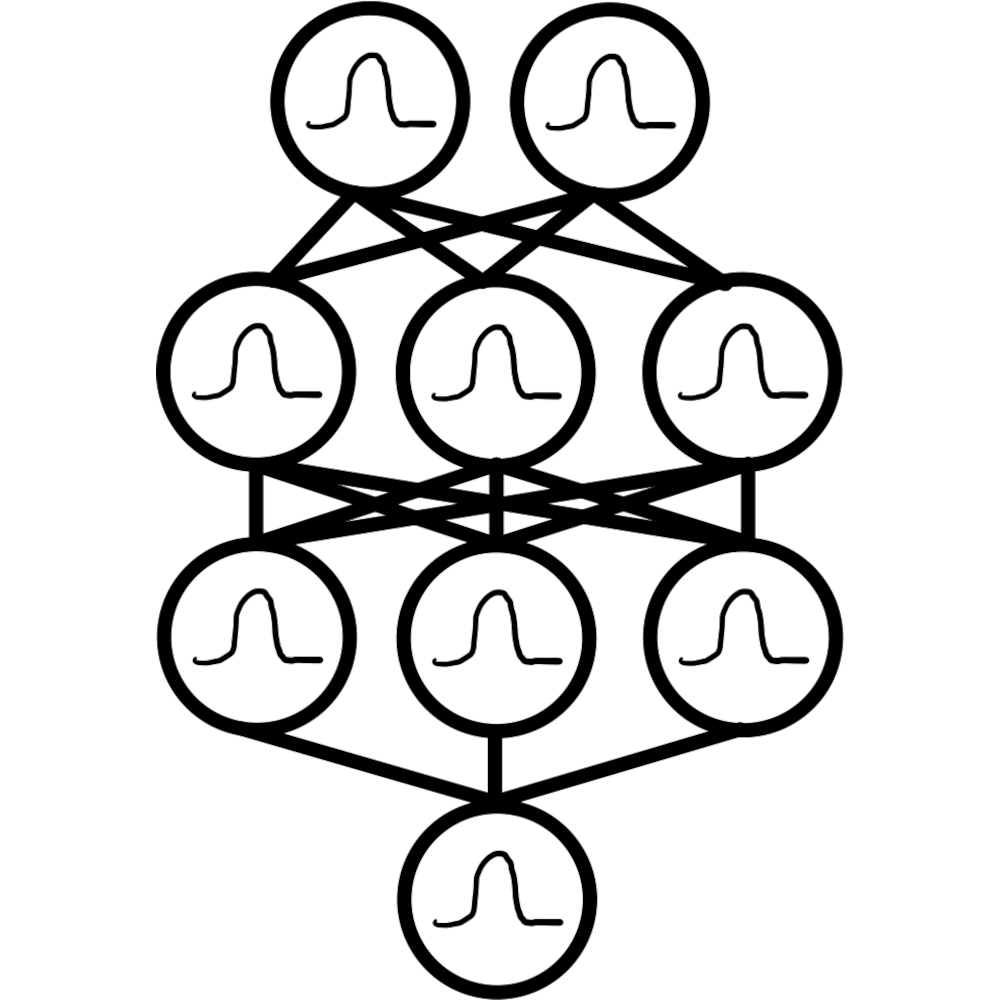

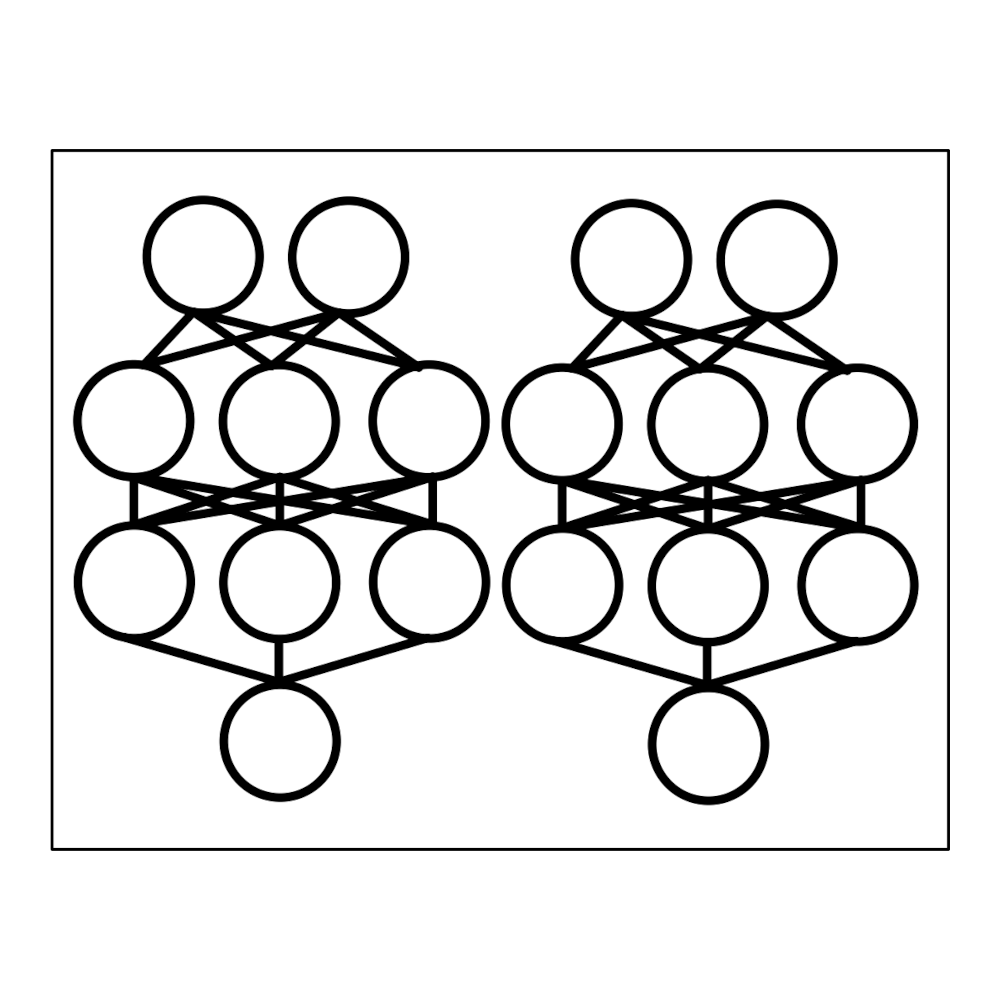

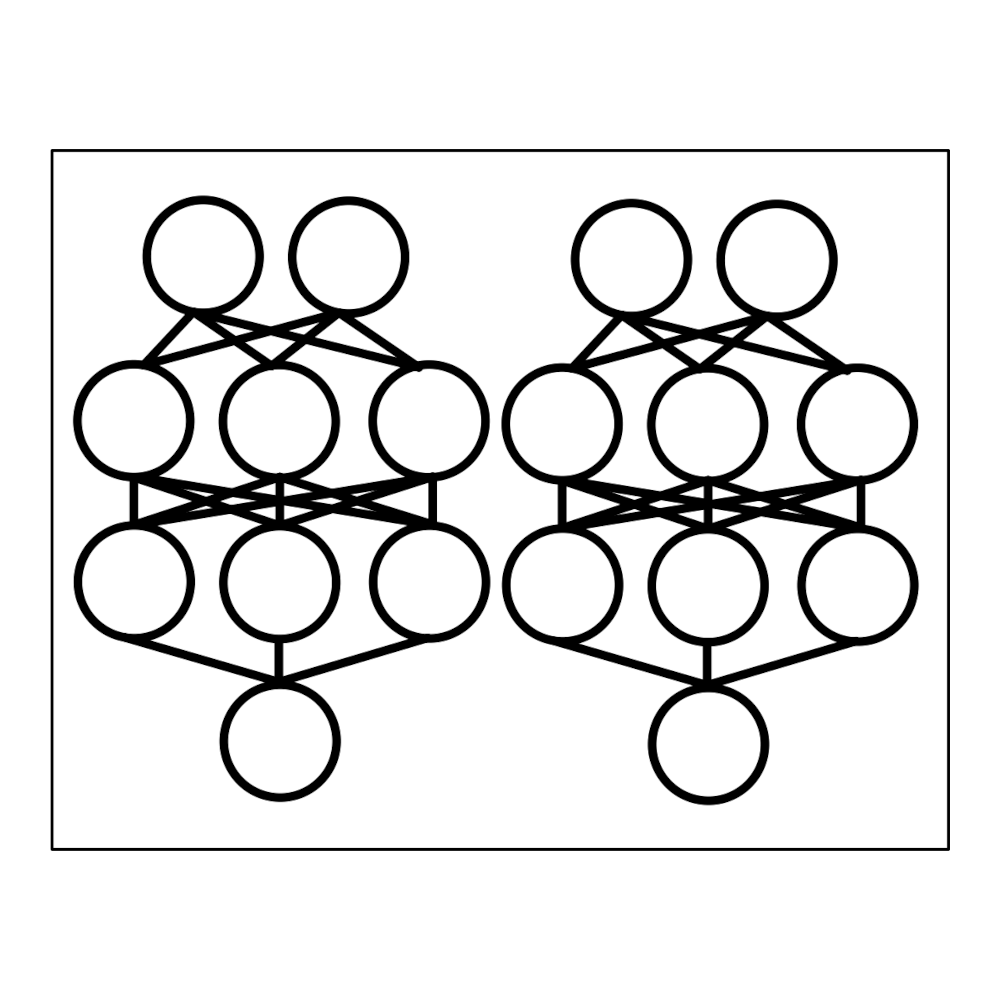

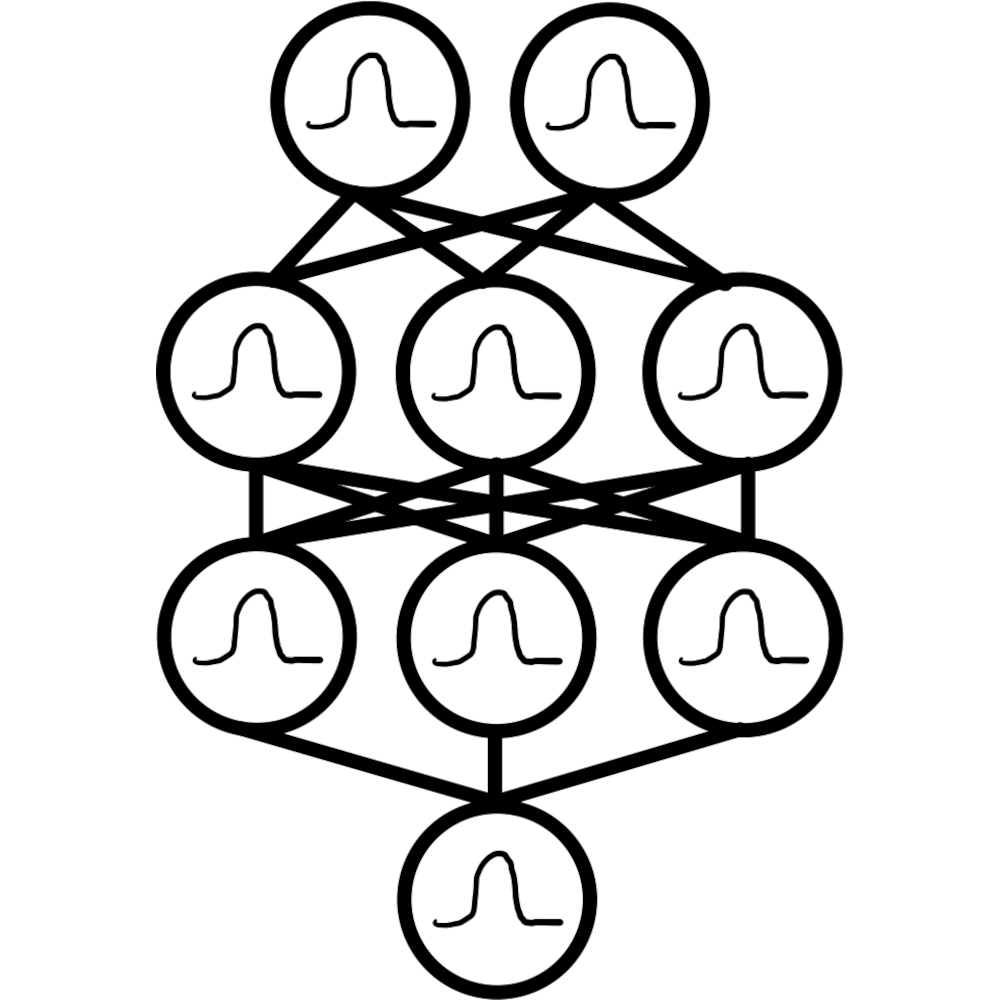

Bayesian Neural Networks and Deep Ensembles: require specialized architectures

Gaussian Processes: require specialized kernels

An example problem

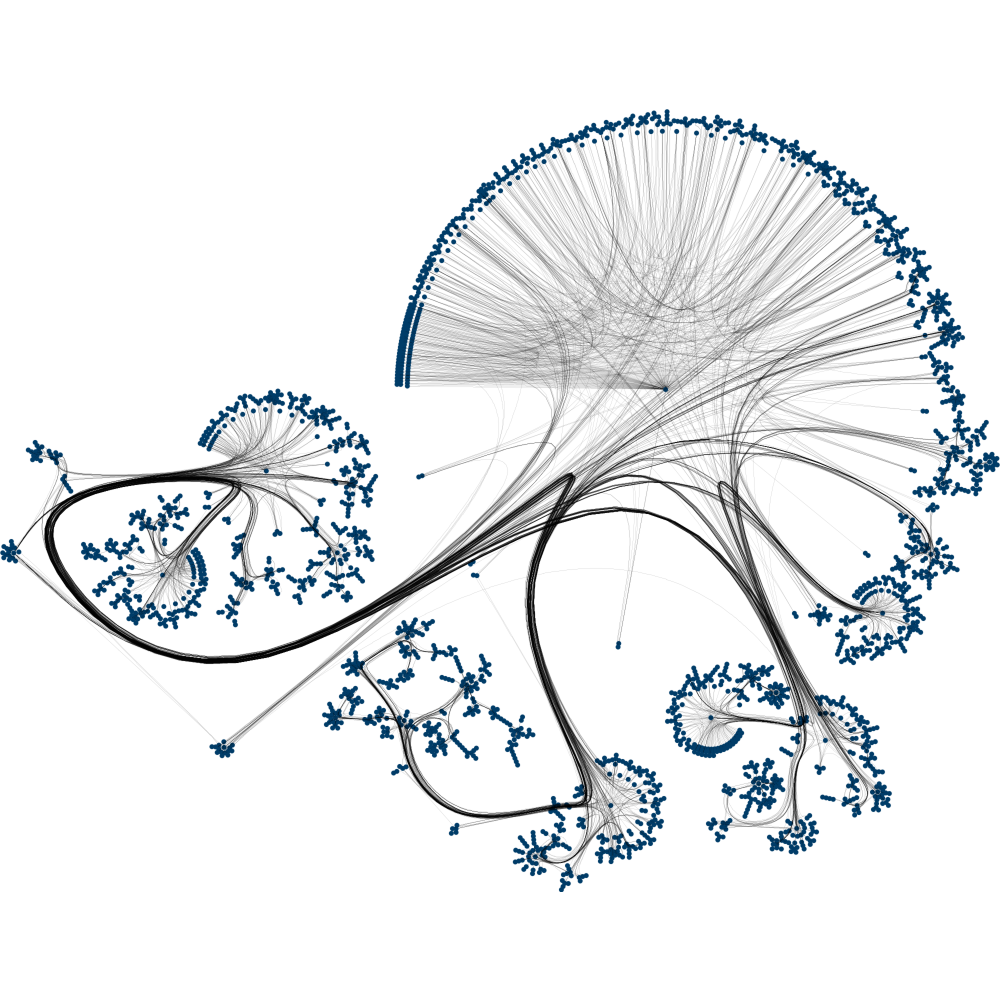

San Jose highway network: graph with 1016 nodes

325 labeled nodes with known traffic speed in miles per hour

Use 250 labeled nodes for training data and 75 for test data

Dataset details: Borovitskiy et al. (AISTATS 2021)

Simple baseline

Figure panels: prediction, uncertainty

Trying out different probabilistic models

Link to code at the end of the talk

Sanity Check: Deterministic Graph CNN

Figure panels: prediction (1 layer), prediction (7 layers)

Probabilistic models

Deep Ensemble

Figure panels: prediction, uncertainty

Bayesian Graph CNN

Figure panels: prediction and uncertainty (100 neurons/layer); prediction and uncertainty (10 neurons/layer)

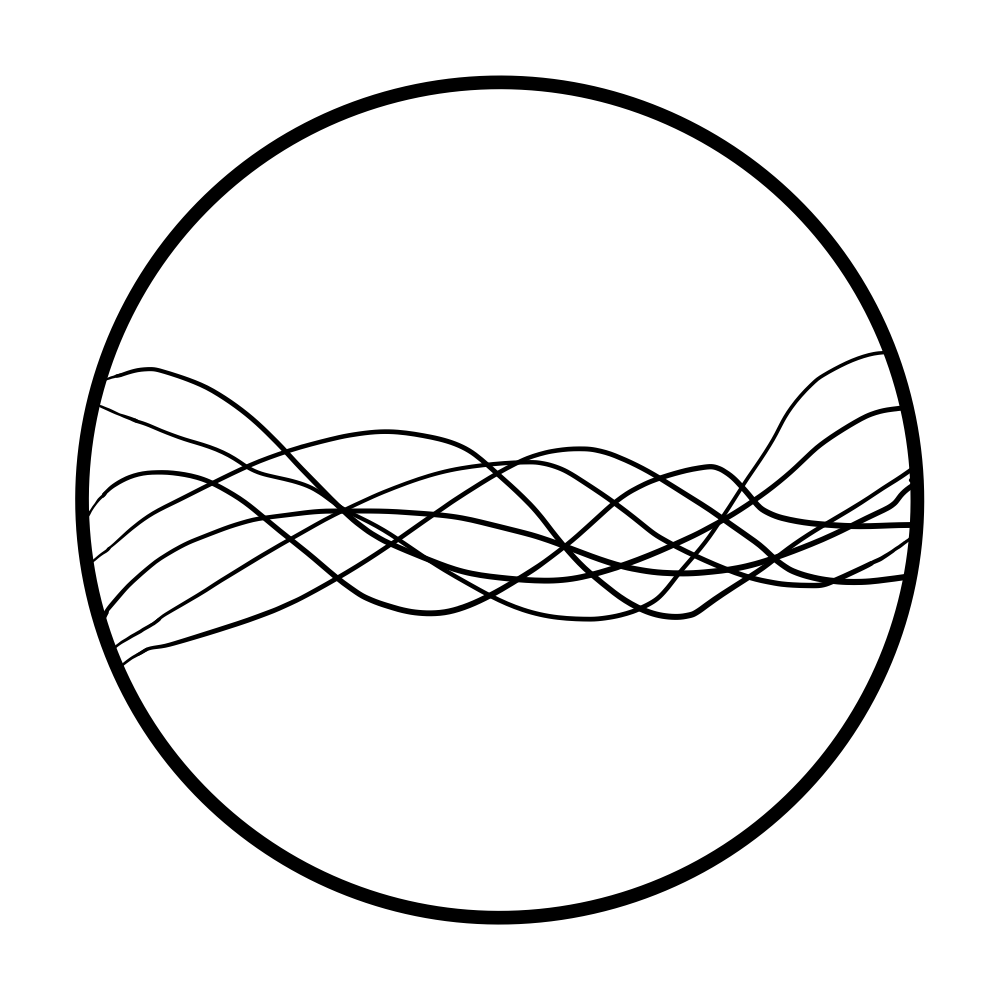

Gaussian Process

Figure panels (geometric Matérn kernel): prediction, uncertainty

Not all Gaussian processes work well
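A minimal sketch of how a Gaussian process turns a kernel on the node set into a prediction and an uncertainty. Everything here is illustrative rather than taken from the talk's code: the graph is a small path graph, the speeds are made-up numbers, the kernel is a graph heat kernel built from the Laplacian eigendecomposition, and the noise level and prior variance are assumed values.

```python
import numpy as np

# Hypothetical toy graph: a path with 8 nodes (not the San Jose network).
n = 8
adj = np.zeros((n, n))
for i in range(n - 1):
    adj[i, i + 1] = adj[i + 1, i] = 1.0
lap = np.diag(adj.sum(axis=1)) - adj

# Graph heat kernel from the Laplacian eigenpairs, scaled to an assumed
# prior variance of 25 (mph^2) on average over the nodes.
kappa = 1.5
lam, f = np.linalg.eigh(lap)
k = (f * np.exp(-0.5 * kappa**2 * lam)) @ f.T
k = 25.0 * k / np.mean(np.diag(k))

# Made-up training data: speeds observed at the first three nodes.
train = np.array([0, 1, 2])
y = np.array([60.0, 55.0, 50.0])
noise_var = 1.0  # assumed observation noise variance

# Standard GP regression formulas with a constant mean equal to the data mean.
y_mean = y.mean()
k_tt = k[np.ix_(train, train)] + noise_var * np.eye(len(train))
k_at = k[:, train]
post_mean = y_mean + k_at @ np.linalg.solve(k_tt, y - y_mean)
post_var = np.diag(k) - np.einsum("ij,ji->i", k_at, np.linalg.solve(k_tt, k_at.T))
post_std = np.sqrt(np.clip(post_var, 0.0, None))

print(np.round(post_mean, 1))  # prediction at every node
print(np.round(post_std, 1))   # uncertainty at every node
```

In this toy setup the posterior standard deviation is small at the labeled nodes and grows toward the far end of the path, which is the qualitative behavior the uncertainty panels are meant to show.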

A way to think about this

Geometric Gaussian processes:

- Reliable off-the-shelf probabilistic models

- Solid baselines to benchmark other models against

This example: easy-to-use and visualize benchmark for regression on geometric data

Deep Dive: Geometric Gaussian Processes

Geometric (non-Euclidean) domains

- Graphs: road networks

- Manifolds: physics, robotics

- Spaces of graphs: drug design

Standard kernels in $\bb{R}^n$

Matérn kernels

$$ k_{\nu, \kappa, \sigma^2}(x,x') = \sigma^2 \frac{2^{1-\nu}}{\Gamma(\nu)} \del{\sqrt{2\nu} \frac{\abs{x-x'}}{\kappa}}^\nu K_\nu \del{\sqrt{2\nu} \frac{\abs{x-x'}}{\kappa}} $$

$$ k_{\infty, \kappa, \sigma^2}(x,x') = \sigma^2 \exp\del{-\frac{\abs{x-x'}^2}{2\kappa^2}} $$

$\sigma^2$: variance

$\kappa$: length scale

$\nu$: smoothness

$\nu\to\infty$: RBF kernel (Gaussian, heat, diffusion)

Figure panels: $\nu = 1/2$, $\nu = 3/2$, $\nu = 5/2$, $\nu = \infty$
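A small sketch of the Euclidean Matérn family for the four smoothness values shown above. To stay within NumPy it uses the well-known closed forms for half-integer $\nu$ rather than the general Bessel-function formula; the function name and the evaluation point are illustrative choices.

```python
import numpy as np


def matern_kernel(r, nu, kappa=1.0, sigma2=1.0):
    """Euclidean Matérn kernel as a function of the distance r = |x - x'|.

    Only nu in {1/2, 3/2, 5/2, inf} is supported, via closed forms.
    """
    r = np.asarray(r, dtype=float)
    if nu == np.inf:
        # Limit nu -> infinity: RBF (squared exponential) kernel.
        return sigma2 * np.exp(-(r**2) / (2.0 * kappa**2))
    s = np.sqrt(2.0 * nu) * r / kappa
    if nu == 0.5:
        poly = 1.0
    elif nu == 1.5:
        poly = 1.0 + s
    elif nu == 2.5:
        poly = 1.0 + s + s**2 / 3.0
    else:
        raise ValueError("closed form implemented only for nu in {0.5, 1.5, 2.5, inf}")
    return sigma2 * poly * np.exp(-s)


# Kernel values at distance 1 for each smoothness.
values_at_one = [float(matern_kernel(1.0, nu)) for nu in (0.5, 1.5, 2.5, np.inf)]
print(values_at_one)
```

For $\nu = 1/2$ this reduces to the exponential kernel $\sigma^2 e^{-r/\kappa}$, and at a fixed distance the kernel value increases with $\nu$ toward the RBF value.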

How to generalize these to non-Euclidean settings?

Distance-based approach

$$ k_{\infty, \kappa, \sigma^2}(x,x') = \sigma^2\exp\del{-\frac{|x - x'|^2}{2\kappa^2}} $$

$$ k_{\infty, \kappa, \sigma^2}^{(d)}(x,x') = \sigma^2\exp\del{-\frac{d(x,x')^2}{2\kappa^2}} $$

Geometry-aware, but...

Manifolds: not well-defined unless the manifold is isometric to a Euclidean space

Feragen et al. (CVPR 2015)

Graphs: not well-defined unless nodes can be isometrically embedded into a Hilbert space

Schoenberg (Trans. Am. Math. Soc. 1938)

Spaces of graphs: what is a space of graphs?

Need a different generalization
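A small numerical illustration of why the distance-based construction can fail, under assumptions chosen for this sketch: the graph is the 4-cycle, $d$ is the shortest-path distance, and $\kappa = 1.5$. The distance-based Gaussian "kernel" matrix then has a negative eigenvalue, so it is not a valid covariance, while the heat kernel built from the graph Laplacian is positive definite.

```python
import numpy as np

# Shortest-path distances on the 4-cycle 0 - 1 - 2 - 3 - 0.
n = 4
idx = np.arange(n)
hops = np.abs(idx[:, None] - idx[None, :])
dist = np.minimum(hops, n - hops).astype(float)

kappa = 1.5

# Distance-based squared exponential: plug d(x, x') into the RBF formula.
k_dist = np.exp(-(dist**2) / (2.0 * kappa**2))
min_eig_dist = np.linalg.eigvalsh(k_dist).min()

# Heat kernel: matrix exponential of the graph Laplacian, via eigenpairs.
adj = (dist == 1).astype(float)
lap = np.diag(adj.sum(axis=1)) - adj
lam, f = np.linalg.eigh(lap)
k_heat = (f * np.exp(-0.5 * kappa**2 * lam)) @ f.T
min_eig_heat = np.linalg.eigvalsh(k_heat).min()

print(min_eig_dist)  # negative: not positive semi-definite
print(min_eig_heat)  # positive
```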

Another approach: stochastic partial differential equations

$$ \ubr{\del{\frac{2\nu}{\kappa^2} - \Delta}^{\frac{\nu}{2}+\frac{d}{4}} f = \c{W}}{\t{Matérn}} $$

$\Delta$: Laplacian

$\c{W}$: Gaussian white noise

$d$: dimension

Generalizes well

Geometry-aware: respects the symmetries of the space

Implicit: no formula for the kernel

Whittle (ISI 1963)

Lindgren et al. (JRSSB 2011)

Making it explicit: node sets of graphs

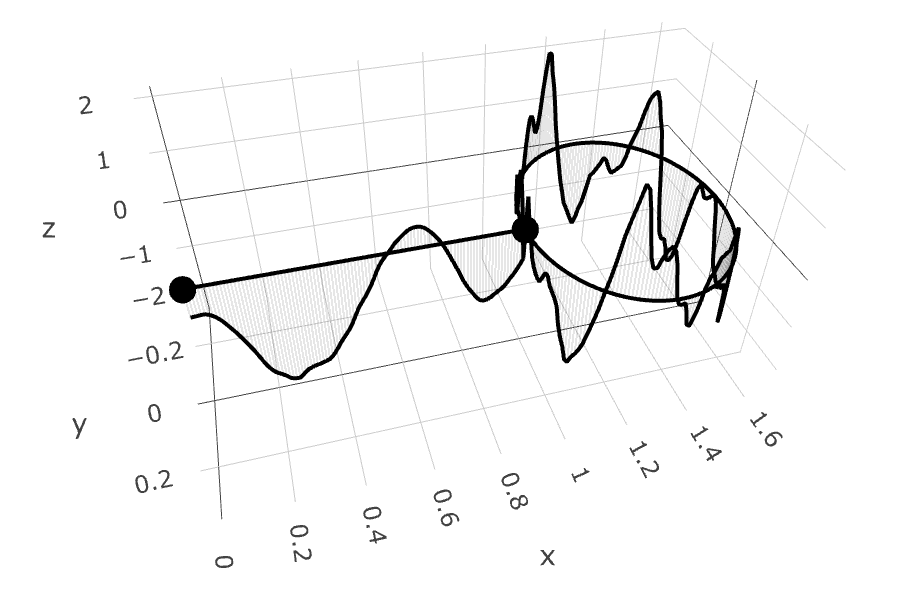

Motivating example: traffic speed modeling

Matérn kernels on weighted undirected graphs

SPDE turns into a stochastic linear system: solution has kernel $$ k_{\nu, \kappa, \sigma^2}(i, j) = \frac{\sigma^2}{C_{\nu, \kappa}} \sum_{n=0}^{\abs{V}-1} \Phi_{\nu, \kappa}(\lambda_n) \v{f_n}(i)\v{f_n}(j) $$

$\lambda_n, \v{f_n}$: eigenvalues and eigenvectors of the Laplacian matrix

$$ \Phi_{\nu, \kappa}(\lambda) = \begin{cases} \del{\frac{2\nu}{\kappa^2} + \lambda}^{-\nu} & \nu < \infty : \t{Matérn} \\ e^{-\frac{\kappa^2}{2} \lambda} & \nu = \infty : \t{heat (sq. exp., RBF)} \end{cases} $$
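A minimal NumPy sketch of the eigendecomposition formula for a small graph. Several choices are assumptions of this sketch rather than statements from the slides: the Laplacian matrix is taken as $D - A$, so its eigenvalues satisfy $\lambda_n \ge 0$; the Matérn spectral function is evaluated in the rescaled form $(1 + \kappa^2 \lambda / (2\nu))^{-\nu}$, which differs from $(2\nu/\kappa^2 + \lambda)^{-\nu}$ only by a constant that is absorbed into $C_{\nu, \kappa}$; and $C_{\nu, \kappa}$ is chosen so that the variance averaged over nodes equals $\sigma^2$. The function name and the example graph are illustrative.

```python
import numpy as np


def graph_matern_kernel(adjacency, nu, kappa, sigma2=1.0):
    """Matérn (or heat, for nu = inf) kernel matrix on the nodes of a graph."""
    adjacency = np.asarray(adjacency, dtype=float)
    laplacian = np.diag(adjacency.sum(axis=1)) - adjacency
    lam, f = np.linalg.eigh(laplacian)  # eigenvalues lam_n, eigenvectors f[:, n]
    lam = np.clip(lam, 0.0, None)       # remove tiny negative round-off
    if np.isinf(nu):
        phi = np.exp(-0.5 * kappa**2 * lam)
    else:
        # Rescaled Matérn spectral function; the dropped constant factor
        # cancels in the normalization below.
        phi = (1.0 + kappa**2 * lam / (2.0 * nu)) ** (-nu)
    k = (f * phi) @ f.T                 # sum_n phi(lam_n) f_n(i) f_n(j)
    c = np.mean(np.diag(k))             # assumed normalization constant
    return sigma2 * k / c


# Illustrative weighted undirected graph: a path 0 - 1 - 2 - 3 - 4, unit weights.
adjacency = np.diag(np.ones(4), k=1) + np.diag(np.ones(4), k=-1)
k_matern = graph_matern_kernel(adjacency, nu=1.5, kappa=1.0)
k_heat = graph_matern_kernel(adjacency, nu=np.inf, kappa=1.0)
print(np.round(k_matern, 3))
```

With this scaling, increasing $\nu$ moves the Matérn kernel matrix toward the heat kernel matrix, mirroring the $\nu \to \infty$ limit in the Euclidean case.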

Making it explicit: other settings

Compact manifolds: $$ k_{\nu, \kappa, \sigma^2}(x,x') = \frac{\sigma^2}{C_{\nu, \kappa}} \sum_{n=0}^\infty \Phi_{\nu, \kappa}(\lambda_n) f_n(x) f_n(x') $$

Infinite summation where $\lambda_n, f_n$ are Laplace–Beltrami eigenpairs
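A sketch of the truncated series on the simplest compact manifold, the unit circle, for the heat kernel case. On the circle the Laplace–Beltrami eigenfunctions are the constant, $\cos(n\theta)$ and $\sin(n\theta)$, with eigenvalues $n^2$, so the sum collapses to a cosine series in $\theta - \theta'$. The truncation level, the normalization $k(\theta, \theta) = \sigma^2$, and the function name are assumptions of this sketch.

```python
import numpy as np


def circle_heat_kernel(theta1, theta2, kappa, sigma2=1.0, n_terms=50):
    """Heat kernel on the unit circle via a truncated eigenfunction series."""
    diff = np.subtract.outer(theta1, theta2)   # pairwise angle differences
    n = np.arange(1, n_terms + 1)
    phi = np.exp(-0.5 * kappa**2 * n**2)       # Phi(lambda_n) with lambda_n = n^2
    # cos(n a) cos(n b) + sin(n a) sin(n b) = cos(n (a - b)); n = 0 gives the 1.
    series = 1.0 + 2.0 * (np.cos(diff[..., None] * n) @ phi)
    c = 1.0 + 2.0 * phi.sum()                  # normalize so that k(x, x) = sigma2
    return sigma2 * series / c


thetas = np.linspace(0.0, 2.0 * np.pi, 8, endpoint=False)
K = circle_heat_kernel(thetas, thetas, kappa=0.5)
print(np.round(K[0], 3))
```

Because the spectral weights decay like $e^{-\kappa^2 n^2 / 2}$, a few dozen terms are already far below floating-point precision for moderate $\kappa$.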

Non-compact manifolds:

- No summation: instead, integral approximated via Monte Carlo
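To illustrate the "integral approximated via Monte Carlo" idea, here is the most familiar instance: the Euclidean line, which is itself non-compact. The RBF kernel is an integral of cosines against a Gaussian spectral density, and sampling frequencies from that density gives a Monte Carlo estimate (random Fourier features). This is only a Euclidean analogy chosen for the sketch, not the construction used for hyperbolic or SPD spaces; the sample size and length scale are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(0)
kappa = 0.7
n_samples = 20000

# Spectral density of the RBF kernel with length scale kappa: N(0, 1 / kappa^2).
omega = rng.normal(0.0, 1.0 / kappa, size=n_samples)

x = np.linspace(-2.0, 2.0, 9)
diff = x[:, None] - x[None, :]

# Exact kernel and its Monte Carlo estimate E[cos(omega * (x - x'))].
k_exact = np.exp(-(diff**2) / (2.0 * kappa**2))
k_mc = np.cos(diff[..., None] * omega).mean(axis=-1)

max_err = np.abs(k_exact - k_mc).max()
print(max_err)
```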

Spaces of graphs:

- Geometry: treat the set of graphs as the node set of a larger graph

- Then, heat and Matérn kernels are automatically defined

- Larger graph is huge: need clever computational techniques
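A toy sketch of the three points above for the set of all unweighted graphs on 3 labeled nodes. It assumes, as one natural reading of the construction, that two graphs are neighbors in the larger graph when they differ in exactly one edge, which makes the larger graph a hypercube over edge-indicator vectors. The brute-force heat kernel is then compared against the closed form $\tanh(\kappa^2/2)^{h}$, where $h$ is the number of differing edges; such a formula is an example of how the huge eigendecomposition can be avoided.

```python
import itertools

import numpy as np

n_nodes = 3
n_edges = n_nodes * (n_nodes - 1) // 2

# Each graph is a 0/1 vector saying which of the possible edges are present.
graphs = np.array(list(itertools.product([0, 1], repeat=n_edges)))  # shape (8, 3)

# Larger graph: its nodes are graphs, joined when they differ in one edge.
hamming = (graphs[:, None, :] != graphs[None, :, :]).sum(axis=-1)
adj = (hamming == 1).astype(float)
lap = np.diag(adj.sum(axis=1)) - adj

# Brute force: heat kernel from the eigenpairs of the larger graph's Laplacian.
kappa = 1.0
lam, f = np.linalg.eigh(lap)
k = (f * np.exp(-0.5 * kappa**2 * lam)) @ f.T
k = k / k[0, 0]  # unit variance (the diagonal is constant by symmetry)

# Closed form on the hypercube: depends only on the number of differing edges.
k_closed = np.tanh(0.5 * kappa**2) ** hamming

print(np.abs(k - k_closed).max())
```

The brute-force route needs a matrix of size $2^{n(n-1)/2}$, which is already out of reach for graphs with a few dozen nodes, while the closed form only needs the edge difference count between two graphs.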

Gaussian processes: geometric settings

Simple manifolds: meshes, spheres, tori

Borovitskiy et al. (NeurIPS 2020)

Compact Lie groups and homogeneous spaces: $\small\f{SO}(n)$, resp. $\small\f{Gr}(n, r)$

Azangulov et al. (JMLR 2024)

Non-compact symmetric spaces: $\small\bb{H}_n$ and $\small\f{SPD}(n)$

Azangulov et al. (JMLR 2024)

Gaussian processes: other geometric settings

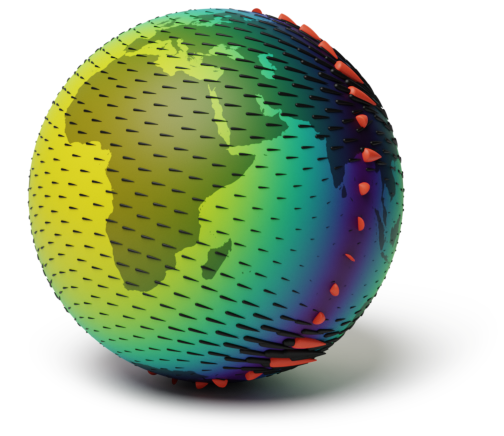

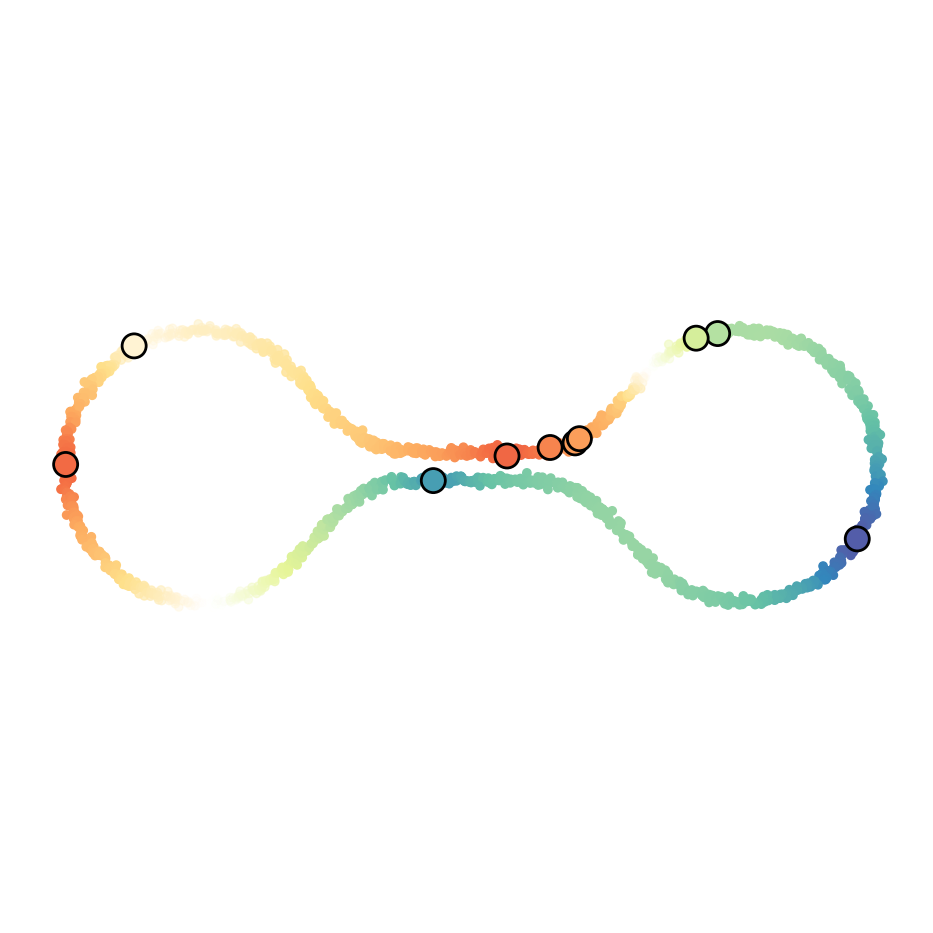

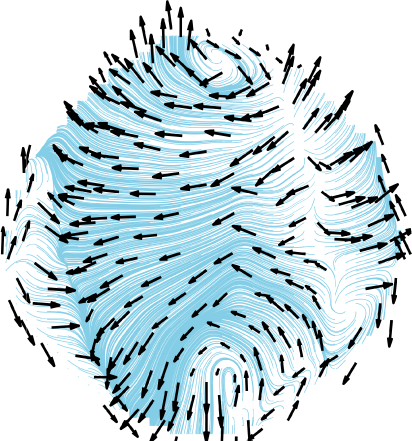

Vector fields on simple manifolds

Robert-Nicoud et al. (AISTATS 2024)

Edges of graphs

Yang et al. (AISTATS 2024)

Metric graphs

Bolin et al. (Bernoulli 2024)

Cellular Complexes

Alain et al. (ICML 2024)

Spaces of Graphs

Borovitskiy et al. (AISTATS 2023)

Implicit geometry

Semi-supervised learning: scalar functions

Fichera et al. (NeurIPS 2023)

Semi-supervised learning: vector fields

Peach et al. (ICLR 2024)

Theoretical results

Hyperparameter inference

Li et al. (JMLR 2023)

Minimax errors

Rosa et al. (NeurIPS 2023)

Emerging real-world applications

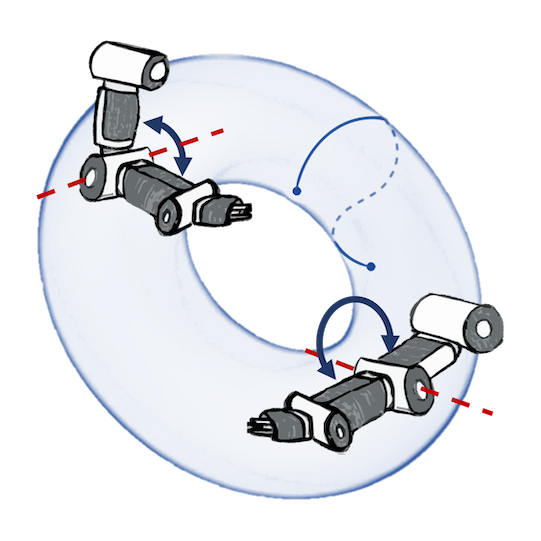

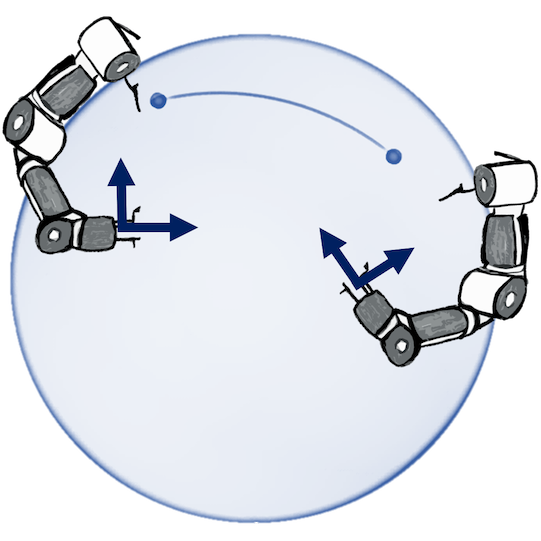

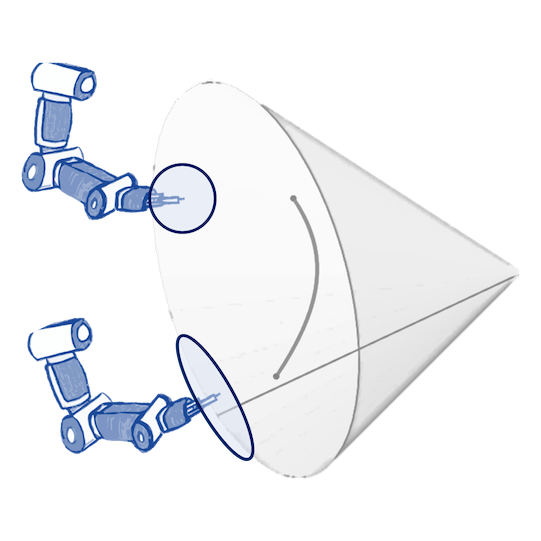

Robotics: Bayesian optimization of control policies

Joint postures: $\mathbb{T}^d$

Rotations: $\mathbb{S}_3$, $\operatorname{SO}(3)$

Manipulability: $\operatorname{SPD}(3)$

Jaquier et al. (CoRL 2019, CoRL 2021), figures by the authors

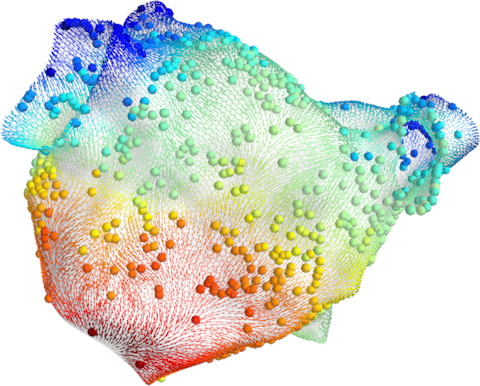

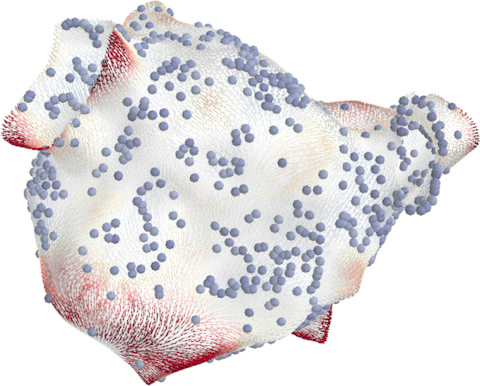

Medicine: probabilistic atrial activation maps

Prediction

Uncertainty

Matérn Gaussian processes on meshes (independently proposed)

Coveney et al. (TBE 2019, PTRSA 2020), figures by the authors

Software

Example Problem: Code

Stack:

- PyTorch Geometric: graph neural networks

- Pyro: probabilistic programming

- GPyTorch: Gaussian processes

- GeometricKernels: geometric Matérn kernels

GeometricKernels: Matérn and heat kernels on geometric domains

https://geometric-kernels.github.io/

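Example code:

>>> # Import the backend, a space, and the kernel.

>>> import numpy as np

>>> from geometric_kernels.spaces import Hypersphere

>>> from geometric_kernels.kernels import MaternGeometricKernel

>>> # Define a space (2-dim sphere).

>>> hypersphere = Hypersphere(dim=2)

>>> # Initialize kernel with default parameters.

>>> kernel = MaternGeometricKernel(hypersphere)

>>> params = kernel.init_params()

>>> # Three points on the sphere, given by their coordinates in R^3.

>>> xs = np.array([[0., 0., 1.], [0., 1., 0.], [1., 0., 0.]])

>>> # Compute and print out the 3x3 kernel matrix, rounded to 3 decimals.

>>> print(np.around(kernel.K(params, xs), 3))

[[1.    0.356 0.356]

 [0.356 1.    0.356]

 [0.356 0.356 1.   ]]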

Multi-backend: NumPy, JAX, PyTorch, TensorFlow

Summary

Geometric machine learning: increasingly popular

- Waves: kernels on structured data, geometric deep learning

Geometric probabilistic models: emerging research area

- Geometric Gaussian processes: solid off-the-shelf baselines

- Increasingly available but actively developing: join us!

Thank you!

@vabor112

Slides at:

References

V. Borovitskiy, I. Azangulov, A. Terenin, P. Mostowsky, M. P. Deisenroth, and N. Durrande. Matérn Gaussian Processes on Graphs. Artificial Intelligence and Statistics, 2021.

A. Feragen, F. Lauze, and S. Hauberg. Geodesic exponential kernels: When curvature and linearity conflict. Computer Vision and Pattern Recognition, 2015.

I. J. Schoenberg. Metric spaces and positive definite functions. Transactions of the American Mathematical Society, 1938.

P. Whittle. Stochastic processes in several dimensions. Bulletin of the International Statistical Institute, 1963.

F. Lindgren, H. Rue, and J. Lindström. An explicit link between Gaussian fields and Gaussian Markov random fields: the stochastic partial differential equation approach. Journal of the Royal Statistical Society, Series B: Statistical Methodology, 2011.

V. Borovitskiy, A. Terenin, P. Mostowsky, and M. P. Deisenroth. Matérn Gaussian Processes on Riemannian Manifolds. Advances in Neural Information Processing Systems, 2020.

I. Azangulov, A. Smolensky, A. Terenin, and V. Borovitskiy. Stationary Kernels and Gaussian Processes on Lie Groups and their Homogeneous Spaces I: the compact case. Journal of Machine Learning Research, 2024.

I. Azangulov, A. Smolensky, A. Terenin, and V. Borovitskiy. Stationary Kernels and Gaussian Processes on Lie Groups and their Homogeneous Spaces II: non-compact symmetric spaces. Journal of Machine Learning Research, 2024.

D. Robert-Nicoud, A. Krause, and V. Borovitskiy. Intrinsic Gaussian Vector Fields on Manifolds. Artificial Intelligence and Statistics, 2024.

M. Yang, V. Borovitskiy, and E. Isufi. Hodge-Compositional Edge Gaussian Processes. Artificial Intelligence and Statistics, 2024.

D. Bolin, A. B. Simas, and J. Wallin. Gaussian Whittle-Matérn fields on metric graphs. Bernoulli, 2024.

M. Alain, S. Takao, B. Paige, and M. P. Deisenroth. Gaussian Processes on Cellular Complexes. International Conference on Machine Learning, 2024.

V. Borovitskiy, M. R. Karimi, V. R. Somnath, and A. Krause. Isotropic Gaussian Processes on Finite Spaces of Graphs. Artificial Intelligence and Statistics, 2023.

B. Fichera, V. Borovitskiy, A. Krause, and A. Billard. Implicit Manifold Gaussian Process Regression. Advances in Neural Information Processing Systems, 2023.

R. Peach, M. Vinao-Carl, N. Grossman, and M. David. Implicit Gaussian process representation of vector fields over arbitrary latent manifolds. International Conference on Learning Representations, 2024.

D. Li, W. Tang, and S. Banerjee. Inference for Gaussian Processes with Matérn Covariogram on Compact Riemannian Manifolds. Journal of Machine Learning Research, 2023.

P. Rosa, V. Borovitskiy, A. Terenin, and J. Rousseau. Posterior Contraction Rates for Matérn Gaussian Processes on Riemannian Manifolds. Advances in Neural Information Processing Systems, 2023.

N. Jaquier, V. Borovitskiy, A. Smolensky, A. Terenin, T. Asfour, and L. Rozo. Geometry-aware Bayesian Optimization in Robotics using Riemannian Matérn Kernels. Conference on Robot Learning, 2021.

N. Jaquier, L. Rozo, S. Calinon, and M. Bürger. Bayesian optimization meets Riemannian manifolds in robot learning. Conference on Robot Learning, 2019.

S. Coveney, C. Corrado, C. H. Roney, R. D. Wilkinson, J. E. Oakley, F. Lindgren, S. E. Williams, M. D. O'Neill, S. A. Niederer, and R. H. Clayton. Probabilistic interpolation of uncertain local activation times on human atrial manifolds. IEEE Transactions on Biomedical Engineering, 2019.

S. Coveney, C. Corrado, C. H. Roney, D. O'Hare, S. E. Williams, M. D. O'Neill, S. A. Niederer, R. H. Clayton, J. E. Oakley, and R. D. Wilkinson. Gaussian process manifold interpolation for probabilistic atrial activation maps and uncertain conduction velocity. Philosophical Transactions of the Royal Society A, 2020.